When I first visited the ITP show back in 2005 or so, I realized that I was interested in physical computing, and on my way home from the show I came up with an idea for a project in the ITP spirit: a window into a virtual world.

The idea was to attach a compass-and-gyroscope contraption to a notebook or tablet PC and use it to browse 360-degree panoramas, so that the panorama's viewport always matches the direction the screen is facing. By turning the screen, the user moves the viewport and sees the “virtual world on the other side of the portal”.

You can probably guess why I’m writing about this concept now: back then I couldn’t imagine that a device like that would be available to consumers. And now I’m typing this post on one. Yes, I’m talking about the Apple iPad ;)

So, getting back to the concept: there is no longer any need for the custom hardware I thought was necessary five years ago. All that's needed now is an iPad application.

The app would be an OpenGL sphere with a 360-degree panorama mapped onto it as a texture, plus some (probably sophisticated) logic that scrolls the panorama based on the accelerometer and compass readings.
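The core of that logic could start out fairly simple. Below is a minimal Python sketch, not tied to any iPad API, assuming the device reports a compass heading in degrees clockwise from north and a pitch angle derived from the accelerometer. The function names, the x-east/y-up/z-north world frame, and the convention that the panorama's left edge faces north are my assumptions. It turns those two angles into a view direction for a camera sitting inside the OpenGL sphere, finds the matching pixel of an equirectangular panorama, and smooths the jittery compass with a low-pass filter that handles the 359-to-0 wraparound.

```python
import math


def look_vector(heading_deg, pitch_deg):
    """Unit view direction for a camera at the center of the panorama sphere.

    Assumed right-handed world frame: x = east, y = up, z = north.
    heading_deg: compass heading, clockwise from north.
    pitch_deg: tilt above (+) or below (-) the horizon, from the accelerometer.
    """
    h = math.radians(heading_deg)
    p = math.radians(pitch_deg)
    return (math.cos(p) * math.sin(h), math.sin(p), math.cos(p) * math.cos(h))


def equirect_pixel(heading_deg, pitch_deg, width, height):
    """Pixel of an equirectangular panorama that should sit at the screen center.

    Assumes column 0 of the image faces north and row 0 is straight up.
    """
    pitch = max(-90.0, min(90.0, pitch_deg))  # clamp to the valid range
    u = (heading_deg % 360.0) / 360.0         # 0..1 around the horizon
    v = 0.5 - pitch / 180.0                   # 0 at zenith, 1 at nadir
    return (u * width, v * height)


def smooth_heading(prev_deg, reading_deg, alpha=0.15):
    """Exponential low-pass filter for noisy compass headings.

    Moves the short way around the circle, so 359 -> 1 is +2 degrees rather
    than -358. alpha in (0, 1]: higher reacts faster, lower is smoother.
    """
    diff = (reading_deg - prev_deg + 180.0) % 360.0 - 180.0
    return (prev_deg + alpha * diff) % 360.0


if __name__ == "__main__":
    heading = 0.0
    for reading in [358.0, 3.0, 1.0, 359.5]:  # jittery readings around north
        heading = smooth_heading(heading, reading)
    print(look_vector(heading, 10.0))
    print(equirect_pixel(heading, 10.0, 4096, 2048))
```

The smoothing trades a little lag for stability; a gyroscope, where available, would allow a complementary filter that reacts faster without the jitter.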

Combine it with some sci-fi or real-estate panoramas and you have a cool product that blows people's minds and gets all the blogging attention you need to make your first million in the App Store.

Another app for real-estate brokers that could go along with this one is a panorama maker: just point your camera around the room (maybe in video mode), and the footage, augmented with direction and tilt data, can help automate panorama creation. The broker (or “for sale by owner” enthusiast) then simply uploads the result to the property’s site.

All of that is a bit optimistic, obviously, since panorama creation involves some hardcore math, significant image processing, and often manual work, but it can definitely be aided by orientation (direction and tilt) data.
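Here is one way orientation data could help: a rough Python sketch, assuming each captured frame is tagged with a compass heading and pitch and that the camera's horizontal and vertical fields of view are known. All function names are hypothetical. It estimates how many shots a full turn needs, flags coverage gaps, measures the overlap between neighboring frames, and gives each frame a first-guess position on an equirectangular canvas. The placement ignores projection distortion, so it is only a starting point near the horizon that feature-based stitching (for example OpenCV's stitching module) would still have to refine.

```python
import math


def shots_needed(hfov_deg, overlap=0.25):
    """Frames needed for a full 360-degree turn with the given fractional overlap."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    step = hfov_deg * (1.0 - overlap)  # degrees to turn between shots
    return math.ceil(360.0 / step)


def overlap_fraction(h1_deg, h2_deg, hfov_deg):
    """Fraction of a frame's width shared with a neighbor, from headings alone."""
    d = abs((h2_deg - h1_deg + 180.0) % 360.0 - 180.0)  # shortest angular distance
    return max(0.0, (hfov_deg - d) / hfov_deg)


def find_gaps(headings_deg, hfov_deg):
    """Pairs of neighboring headings too far apart for their frames to meet."""
    hs = sorted(h % 360.0 for h in headings_deg)
    gaps = []
    for i, h in enumerate(hs):
        nxt = hs[(i + 1) % len(hs)]  # wraps from the last heading back to the first
        step = (nxt - h) % 360.0 if len(hs) > 1 else 360.0
        if step > hfov_deg:
            gaps.append((h, nxt))
    return gaps


def frame_rect(heading_deg, pitch_deg, hfov_deg, vfov_deg, width, height):
    """First-guess (left, top, w, h) of a frame on an equirectangular canvas.

    Assumes column 0 faces north and row 0 is straight up; left wraps around
    the canvas edge. Ignores projection distortion (rough near the poles).
    """
    cx = (heading_deg % 360.0) * width / 360.0
    cy = (90.0 - pitch_deg) * height / 180.0
    w = hfov_deg * width / 360.0
    h = vfov_deg * height / 180.0
    return ((cx - w / 2.0) % width, cy - h / 2.0, w, h)


if __name__ == "__main__":
    print(shots_needed(60.0))  # hypothetical 60-degree lens, 25% overlap
    shots = [0, 45, 90, 135, 200, 225, 270, 315]  # headings logged while turning
    print(find_gaps(shots, 60.0))  # -> [(135.0, 200.0)]
```

The gap check is the part an app could run live, telling the user to go back and shoot the missing slice before leaving the room.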

Let me know if anything like that already exists or if you’re interested in giving it a try. I’d pay for such an app for sure! I can even do that in advance through kickstarter.com if you like ;)

Comments are always welcome! And don’t go easy on my feelings; tell me what’s wrong here ;)

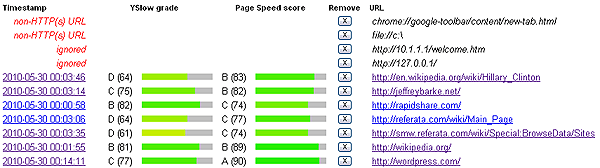

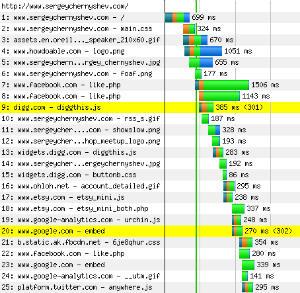

Now that we have page URLs, we need to figure out which assets to load from those pages, and that can be solved by running headless Firefox with Firebug and