First, a short intro: I’ve been thinking about what to do with all the ideas I keep coming up with, and I’d like to try posting them as blog entries under a “Concepts” category. I’ll accept comments and will post additions to each concept there as well. We’ll see how it goes ;)

On my way home today, I was again thinking about asset pre-loading (example, example with inlining) after the page’s onload event, to make subsequent page loads faster for ShowSlow, and realized that it could be built as a very good “easily installable with one line of JavaScript” service!

I think all the key components to this technology already exist!

First, we need to know what to pre-load, and this is where the Google Analytics API comes in: its Content / ga:nextPagePath dimension gives us the data to compute the probability of each next page that users visit.
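
Turning that report into probabilities is just normalising transition counts. A minimal sketch, assuming the GA data has already been exported as hypothetical `(page, next_page, count)` rows (the row shape and `next_page_probabilities` are illustrative names, not part of the GA API). Exits are not counted here, so these are probabilities conditional on the visitor moving on to another page.

```python
from collections import defaultdict


def next_page_probabilities(rows):
    """Turn (page, next_page, count) rows into P(next_page | page).

    `rows` is assumed to be already exported from a Google Analytics report;
    fetching it (OAuth, query parameters) is left out of this sketch.
    """
    totals = defaultdict(int)                       # all transitions out of a page
    counts = defaultdict(lambda: defaultdict(int))  # transitions page -> next_page
    for page, next_page, count in rows:
        totals[page] += count
        counts[page][next_page] += count

    # Normalise each page's outgoing counts so they sum to 1.
    return {
        page: {nxt: c / totals[page] for nxt, c in nexts.items()}
        for page, nexts in counts.items()
    }


rows = [
    ("/", "/pricing", 60),
    ("/", "/blog", 30),
    ("/", "/about", 10),
    ("/pricing", "/signup", 40),
]
probs = next_page_probabilities(rows)
print(probs["/"])  # {'/pricing': 0.6, '/blog': 0.3, '/about': 0.1}
```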

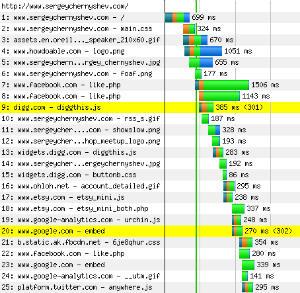

Now that we have page URLs, we need to figure out which assets those pages load. That can be solved by running headless Firefox with the Firebug and NetExport extensions, configured to automatically post HAR files to a beacon on the server.
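
Once a HAR file arrives at the beacon, turning it into a list of candidate assets is straightforward. The fields used below (`log.entries`, `request.url`, `response.content.mimeType`, `response.content.size`, `response.headers`) come from the HAR 1.2 format; the `Asset` type and `assets_from_har` are hypothetical names for this sketch.

```python
import json
from dataclasses import dataclass


@dataclass
class Asset:
    url: str
    mime_type: str       # e.g. "text/css", with parameters such as charset stripped
    size: int            # response.content.size in bytes (0 if missing/negative)
    cache_control: str   # raw Cache-Control response header, "" if absent


def assets_from_har(har_text):
    """Return one Asset per request recorded in a HAR 1.2 document."""
    har = json.loads(har_text)
    assets = []
    for entry in har["log"]["entries"]:
        response = entry["response"]
        # Header names are case-insensitive, so index them in lower case.
        headers = {h["name"].lower(): h["value"] for h in response.get("headers", [])}
        content = response.get("content", {})
        assets.append(Asset(
            url=entry["request"]["url"],
            mime_type=content.get("mimeType", "").split(";")[0].strip(),
            size=max(content.get("size", 0), 0),
            cache_control=headers.get("cache-control", ""),
        ))
    return assets


sample = json.dumps({"log": {"entries": [{
    "request": {"url": "https://example.com/app.js"},
    "response": {
        "content": {"size": 5120, "mimeType": "application/javascript; charset=utf-8"},
        "headers": [{"name": "Cache-Control", "value": "max-age=31536000"}],
    },
}]}})
print(assets_from_har(sample)[0])
```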


HAR contains all the assets and all kinds of useful information about those files, so the tool can make arbitrarily complex decisions about which URLs to pre-load, from a simple “download all JS and CSS files” to “only download small assets that have far-future expiration set” and so on (this could be the company’s secret ingredient that is hard to replicate). This step can be done periodically, since running it in real time is simply unrealistic.
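
Both example rules can be written as small predicates over the HAR-derived assets and combined freely. A sketch under stated assumptions: `Asset`, `pick` and the rule names are hypothetical, the 50 KB and six-month thresholds are guesses, and only `max-age` is read (an `Expires` header is ignored here).

```python
import re
from dataclasses import dataclass


@dataclass
class Asset:
    url: str
    mime_type: str
    size: int           # bytes
    cache_control: str  # raw Cache-Control header


BLOCKING_TYPES = {"text/css", "text/javascript", "application/javascript",
                  "application/x-javascript"}
ONE_YEAR = 365 * 24 * 3600  # seconds


def max_age(cache_control):
    """Seconds from a Cache-Control max-age directive; 0 if absent or no-store."""
    if "no-store" in cache_control:
        return 0
    match = re.search(r"max-age=(\d+)", cache_control)
    return int(match.group(1)) if match else 0


def only_blockers(asset):
    """Rule: "download all JS and CSS files"."""
    return asset.mime_type in BLOCKING_TYPES


def small_and_long_cached(asset, max_bytes=50_000, min_age=ONE_YEAR // 2):
    """Rule: "only small assets with far-future expiration" (thresholds are guesses)."""
    return asset.size <= max_bytes and max_age(asset.cache_control) >= min_age


def pick(assets, *rules):
    """Keep the assets that satisfy every rule."""
    return [a for a in assets if all(rule(a) for rule in rules)]


assets = [
    Asset("https://example.com/app.js", "application/javascript", 20_000,
          "public, max-age=31536000"),
    Asset("https://example.com/site.css", "text/css", 8_000, "no-cache"),
    Asset("https://example.com/hero.jpg", "image/jpeg", 300_000, "max-age=31536000"),
]
print([a.url for a in pick(assets, only_blockers)])
print([a.url for a in pick(assets, only_blockers, small_and_long_cached)])
```

Keeping each rule as a separate predicate makes it easy to try new combinations when the feedback loop suggests a better policy.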

The last piece is probably the most trivial: the actual script tag that asynchronously loads code from the third-party server, passing the current page’s URL as a parameter. That code in turn post-loads all the assets into hidden image objects or something similar, to prevent execution of the assets (for JS and CSS).
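
On the server side, the response to that script tag could be generated roughly like this: take the likely next pages for the current URL, collect their chosen assets, and emit JavaScript that requests them through `Image` objects once the page has loaded. This is a sketch only; `build_preload_script`, the input dictionaries and the `min_prob` cut-off are hypothetical, and whether a resource fetched through `Image` is later reused from cache by a `<script>` or `<link>` depends on the browser.

```python
import json


def build_preload_script(page, next_probs, page_assets, min_prob=0.2):
    """Build the JavaScript the third-party server would return for `page`.

    next_probs:  {page: {next_page: probability}}, e.g. from the GA step
    page_assets: {page: [asset URL, ...]}, e.g. after the HAR selection step
    min_prob:    hypothetical cut-off; less likely next pages are ignored
    """
    urls, seen = [], set()
    # Most likely next pages first, so their assets are requested first.
    for nxt, p in sorted(next_probs.get(page, {}).items(), key=lambda kv: -kv[1]):
        if p < min_prob:
            continue
        for url in page_assets.get(nxt, []):
            if url not in seen:
                seen.add(url)
                urls.append(url)

    # Image objects fetch JS/CSS without executing or applying them; fetching
    # starts only after onload, even if this script arrives after that event.
    return (
        "(function(urls){"
        "function go(){for(var i=0;i<urls.length;i++){new Image().src=urls[i];}}"
        "if(document.readyState==='complete'){go();}"
        "else{window.addEventListener('load',go);}"
        "})(" + json.dumps(urls) + ");"
    )


script = build_preload_script(
    "/",
    {"/": {"/pricing": 0.6, "/blog": 0.3, "/about": 0.1}},
    {
        "/pricing": ["https://example.com/app.js", "https://example.com/pricing.css"],
        "/blog": ["https://example.com/app.js", "https://example.com/blog.css"],
        "/about": ["https://example.com/about.css"],
    },
)
print(script)
```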

So all the user will have to do is provide the site’s homepage URL and approve the GA data import through OAuth. After that, the data will be periodically re-synced and re-crawled for continuous improvement.

Some additional calls on the pages (e.g. at the top and at the end of the page) can measure load times to close the feedback loop for the asset-picking optimization algorithm.
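
One simple way to close that loop is to compare load times for views that were and were not pre-loaded. The sketch below assumes a hypothetical beacon format carrying `top_ms`/`bottom_ms` timestamps from those two calls, and assumes a randomly chosen control group gets no pre-loading so the two medians are comparable. Note that top-to-bottom timing misses anything before the first byte of the page arrives.

```python
from statistics import median


def load_time_delta(beacons):
    """Median load time without pre-loading minus median time with it, per page.

    beacons: list of dicts like
        {"page": "/pricing", "preloaded": True, "top_ms": 0, "bottom_ms": 850}
    where top_ms/bottom_ms are hypothetical timestamps sent by the calls at the
    top and the end of the page. A positive result means pre-loading helped.
    """
    groups = {}
    for b in beacons:
        key = (b["page"], b["preloaded"])
        groups.setdefault(key, []).append(b["bottom_ms"] - b["top_ms"])

    result = {}
    for (page, preloaded), times in groups.items():
        # Only report pages that have both a pre-loaded and a control group.
        if preloaded and (page, False) in groups:
            result[page] = median(groups[(page, False)]) - median(times)
    return result


beacons = (
    [{"page": "/pricing", "preloaded": True, "top_ms": 0, "bottom_ms": t}
     for t in (400, 500, 600)]
    + [{"page": "/pricing", "preloaded": False, "top_ms": 0, "bottom_ms": t}
       for t in (800, 900, 1000)]
)
print(load_time_delta(beacons))  # {'/pricing': 400}
```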

It could be a great service provided by Google Analytics itself, e.g. “for $50/mo we will not only measure your site, but speed it up as well”: they already have the data and the tags in people’s pages, and the only things left are crawling and data crunching, which they have done quite successfully so far.

Does anyone want to hack it together? Please post your comments! ;)

Sergey,

do you have access to my brain?

This is weird: I wrote down exactly this concept a few days ago!

Shall we exchange thoughts at Velocity conf and get this ball rolling?

– Aaron

I don’t know – what were you doing between 8PM and 8:30PM EST today? ;)

Let’s definitely chat at Velocity!

I’ll put my ideas on paper (a Google Doc) before the Velocity conference and share them with you.

I’m not a huge fan of prefetching, mostly because the predictions of what the user will need next are weak and lead to wasteful downloads. I believe your proposal would span web sites; in other words, users on my web site foo.com might often go to bar.com. If there were an error or the predictions were faulty, this could cause foo.com to overload bar.com. We could restrict it to the same site (and ignore the possibility of someone accidentally disabling this restriction). But then, if I own foo.com and am only going to prefetch resources from foo.com, I’d probably prefer to do a custom solution, specifically listing the resources to prefetch, rather than outsourcing that. I think the idea has a lot of potential, but I’m not 100% sold.
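
The same-site restriction could be enforced in the service itself rather than left to configuration. A minimal sketch, taking the strictest reading of “same site” as same origin (scheme, host and port, with default ports filled in); `same_site_only` is a hypothetical name.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url):
    """(scheme, host, port) of a URL, with the default port filled in."""
    parts = urlsplit(url)
    port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    return parts.scheme, parts.hostname, port


def same_site_only(page_url, asset_urls):
    """Drop any asset that is not on exactly the same origin as the page."""
    page_origin = origin(page_url)
    return [u for u in asset_urls if origin(u) == page_origin]


print(same_site_only("https://foo.com/", [
    "https://foo.com/a.js",
    "https://bar.com/b.js",
    "https://foo.com:443/c.css",
    "http://foo.com/d.css",
]))
```

A strict origin check also rejects a site’s own CDN hostnames, so a real service would probably need an explicit per-site allow-list of hosts.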

Yeah, I agree that wasteful downloads are going to happen, but I don’t see a better way to save the day here short of human-powered perfection, which would have to be maintained constantly, and people make mistakes too ;)

BTW, what do you think the overhead would be? What if we limit it to only the “blockers”, JS (blocks downloads) and CSS (blocks rendering), and ignore the heaviest stuff like images? I think it’s possible to find the best match if the feedback loop includes bandwidth costs (it could even calculate the cost of this optimization, ultimately linking additional sales to a cost per customer acquisition that includes the bandwidth overhead).
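
A first overhead estimate can be computed from the data the service already has: a pre-loaded asset is wasted unless the visitor goes on to a page that uses it, and since the next pages are mutually exclusive, their probabilities simply add up. The sketch below (hypothetical names and numbers) gives expected wasted bytes per pageview; multiplying by monthly pageviews and a per-GB price would give the bandwidth cost to feed into the feedback loop.

```python
def expected_overhead(next_probs, page_assets, preload, sizes):
    """Expected number of pre-loaded bytes that go unused, per pageview.

    next_probs:  {next_page: probability}; may sum to < 1, the rest being exits
    page_assets: {next_page: set of asset URLs that page actually loads}
    preload:     asset URLs chosen for pre-loading on the current page
    sizes:       {asset URL: size in bytes}
    """
    wasted = 0.0
    for url in preload:
        # Next pages are mutually exclusive, so their probabilities add up.
        p_used = sum(p for page, p in next_probs.items()
                     if url in page_assets.get(page, set()))
        wasted += (1 - p_used) * sizes[url]
    return wasted


next_probs = {"/pricing": 0.6, "/blog": 0.3}  # remaining 0.1: the visitor leaves
page_assets = {
    "/pricing": {"app.js", "pricing.css"},
    "/blog": {"app.js", "blog.css"},
}
sizes = {"app.js": 100_000, "pricing.css": 20_000, "blog.css": 15_000}
print(expected_overhead(next_probs, page_assets, {"app.js", "pricing.css"}, sizes))
# ≈ 18000: 0.1 * 100000 for app.js plus 0.4 * 20000 for pricing.css
```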

I think this kind of tool can be a good aid to a human-powered system; after all, there is no problem giving users manual controls after the automated system has made its suggestions.
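
Manual controls on top of automated suggestions can be as simple as two lists the site owner maintains. A sketch with hypothetical “always” and “never” settings, where “never” wins over everything else:

```python
def apply_overrides(suggested, always=(), never=()):
    """Combine automated suggestions with a site owner's manual controls.

    suggested: asset URLs picked automatically, in priority order
    always:    URLs the owner forces in (hypothetical setting), placed first
    never:     URLs the owner forbids; this wins over both lists above
    """
    blocked = set(never)
    result, seen = [], set()
    for url in list(always) + list(suggested):
        if url not in blocked and url not in seen:
            seen.add(url)
            result.append(url)
    return result


print(apply_overrides(["a.js", "b.css", "c.js"], always=["x.css"], never=["b.css"]))
```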

Also, I think there is a huge class of sites that don’t have the internal human resources to do this kind of analysis, and whose traffic levels don’t really prevent them from accepting some overhead in exchange for better conversions or more pages per visit.

By no means does it solve all the problems, of course; it’s just one piece of the puzzle.